World-Class Expertise in Formal Methods and AI

Prasenjit Dey

Emergence AI

Leading the lab's research agenda in verification-native autonomous systems, bridging AI research with formal verification methods for mission-critical applications.

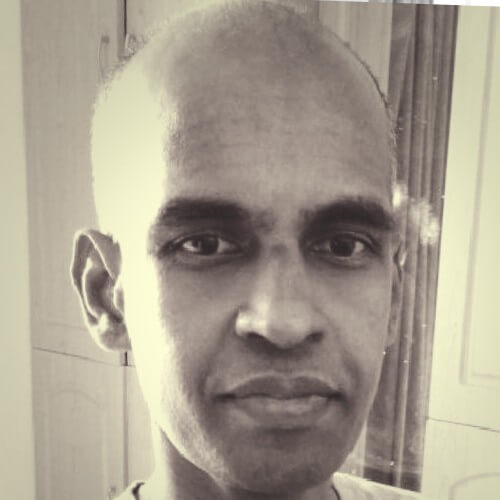

Siddhartha Gadgil

Indian Institute of Science (IISc)

Mathematician and pioneer in automated theorem proving and formal verification. Leading research in applying proof assistants to AI systems.

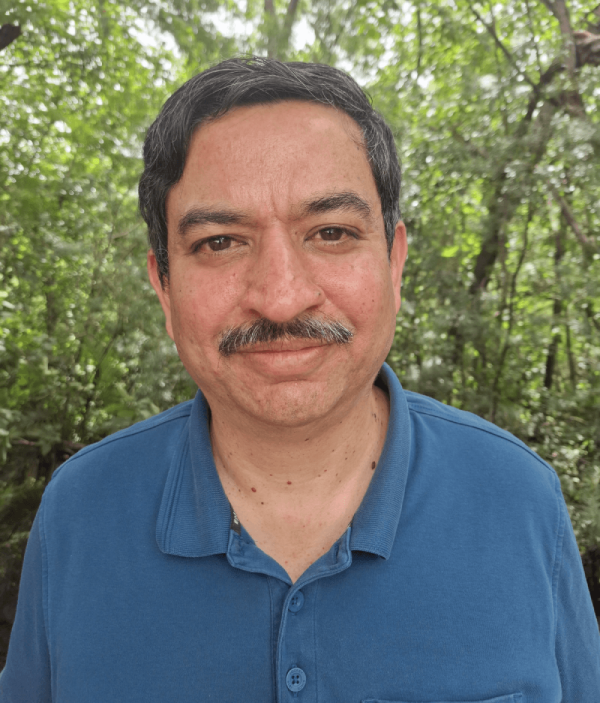

Senthil Kandasamy

Emergence AI

Building trustworthy AI for science through agentic systems and domain-native verification, from computational biophysics to regulated fields like pharma and biomedical simulation.